Artificial intelligence has fundamentally altered the economics of software development. Large Language Models embedded into coding environments now generate working code at a pace that would have been inconceivable just a few years ago. For firms facing competitive pressure, talent shortages, and compressed delivery timelines, AI-assisted coding appears to offer an almost frictionless productivity upgrade.

Yet history from complex, high-risk systems offers a consistent warning: when speed increases faster than governance, fragility follows. The core question, therefore, is not whether AI makes software development faster—it demonstrably does—but whether organisations are redesigning their development systems to absorb that speed without compromising security, maintainability, and institutional control.

This article examines what the evidence actually shows and, more importantly, how global best practices are emerging to manage AI-assisted development responsibly.

What Productivity Gains Really Represent

The headline statistic driving AI adoption comes from Peng et al. (2023), who found that developers using GitHub Copilot completed a coding task nearly 56 percent faster than those working without AI assistance. Completion rates also improved modestly. The finding is widely cited, though the experiment covered a single, well-defined programming task.

However, the metric being measured is narrow. Task completion primarily captures how quickly developers can translate intent into syntactically correct code. It does not measure architectural coherence, security robustness, or long-term maintenance costs. In practice, AI excels at accelerating construction, not judgment.

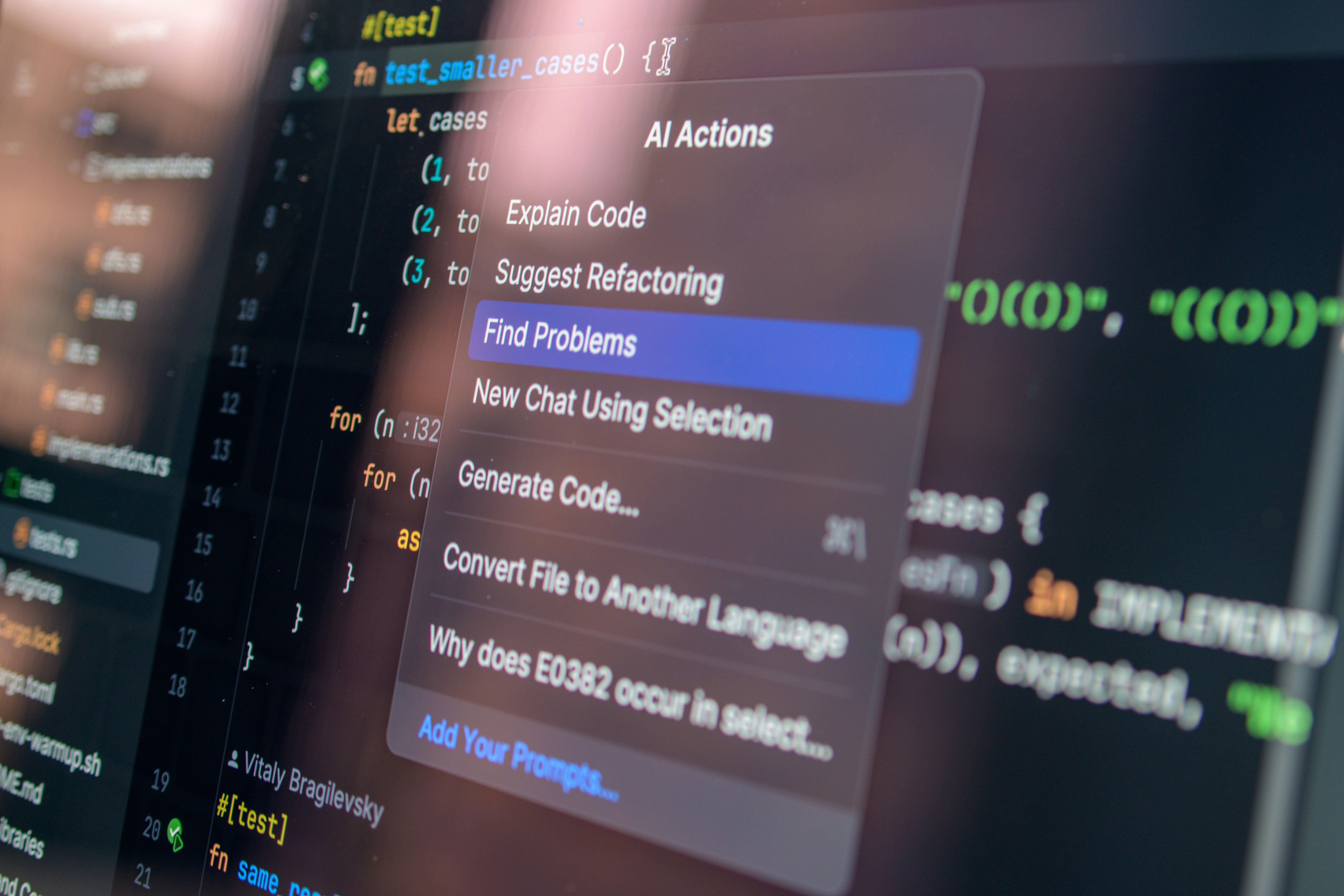

Organisations that have adopted AI successfully recognise this distinction. They treat AI-generated output as an advanced drafting tool—useful for scaffolding, boilerplate, and routine logic—while reserving system design, security decisions, and final integration for human oversight. Productivity gains are therefore real, but they are conditional, not automatic.

The Structural Risk Beneath the Speed

The most serious risk posed by AI-assisted coding is not the occasional insecure function, but the widening gap between code creation and code verification. Empirical audits of AI-generated code, including Pearce et al. (2022), show that language models can reproduce common vulnerabilities when placed in risky contexts. This outcome is unsurprising given that LLMs are trained on public repositories containing a mix of best practices and poor-quality code.

What changes with AI is scale. Code can now be generated faster than teams can reasonably review it using traditional methods. Security audits, threat modelling, and architectural validation remain labour-intensive, while generation is automated. This asymmetry creates a systemic exposure: risk accumulates invisibly until it surfaces as a breach, failure, or runaway maintenance cost.

High-reliability organisations understand that this is not a tooling problem but a workflow design problem.

How Leading Organisations Are Redesigning Development Systems

Rather than rejecting AI-assisted coding, firms operating in regulated or high-risk environments are adapting their systems to discipline speed. One widely adopted practice is to treat AI-generated code as untrusted input. Such code is clearly flagged, subjected to enhanced review requirements, and restricted from use in sensitive modules such as authentication, cryptography, or financial transactions unless additional safeguards are met.
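The "untrusted input" rule described above can be expressed as a small policy check. The following is a minimal sketch, not any particular firm's implementation: the path patterns, the `ai_generated` flag (assumed to come from a commit trailer or pull-request label), and the approval thresholds are all hypothetical.

```python
from dataclasses import dataclass
from fnmatch import fnmatch

# Hypothetical patterns for sensitive modules; a real repository
# would maintain its own list.
SENSITIVE_PATTERNS = ("auth/*", "crypto/*", "payments/*")


@dataclass
class Change:
    path: str
    ai_generated: bool              # assumed to be set from a commit trailer or PR label
    approvals: int                  # number of human approvals so far
    extra_safeguards: bool = False  # e.g. a completed security review


def is_sensitive(path: str) -> bool:
    """True if the path falls under any sensitive pattern."""
    return any(fnmatch(path, pattern) for pattern in SENSITIVE_PATTERNS)


def review_decision(change: Change) -> str:
    """Return 'allow', 'needs_review', or 'block' for a single change."""
    # AI-flagged code is blocked from sensitive modules unless
    # additional safeguards have been met.
    if change.ai_generated and is_sensitive(change.path) and not change.extra_safeguards:
        return "block"
    # Enhanced review: AI-flagged changes need one more approval (assumed values).
    required = 2 if change.ai_generated else 1
    if change.approvals < required:
        return "needs_review"
    return "allow"
```

A check like this would typically run as a merge gate, so the rule is enforced by the pipeline rather than left to reviewer memory.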

Another critical adaptation involves shifting verification earlier in the pipeline. Automated testing, static analysis, and dependency scanning are increasingly used as the first line of defence, reducing the burden on human reviewers. Evidence from enterprise DevSecOps adoption suggests that early automated checks can reduce downstream vulnerabilities by up to half, even before manual inspection begins.

Some organisations have gone further by structurally separating systems into zones based on risk. Rapid iteration is encouraged in low-risk layers such as user interfaces or internal tools, while core logic and safety-critical components remain tightly governed. This approach mirrors safety zoning used in aviation, healthcare, and energy systems and reflects an understanding that not all software components carry equal consequences.

Global Case References

Finance: From blanket bans to “controlled enablement”

Financial institutions were among the earliest to restrict public LLM tools because the immediate risk was not just insecure code, but data leakage and uncontrolled third-party processing. Reports from 2023 showed multiple banks—including Goldman Sachs and Citigroup—restricting employee access to ChatGPT as part of third-party risk controls.

More instructive than the bans is the transition many banks are now making from “no AI” to governed AI. IBM’s analysis describes a pattern in which firms restrict public tools but then deploy corporate interfaces or controlled environments so that employees can use generative AI with auditability and policy guardrails. It notes JPMorgan’s move toward an internal interface after earlier restrictions.

Finance is also not uniformly anti-AI. JPMorgan’s own Payments developer blog describes using GitHub Copilot within its development workflow to improve efficiency, which illustrates that the mature approach is not rejection but tooling within governance.

Best-practice pattern emerging in finance: Banks are treating AI coding assistants like any other high-risk vendor/tool: allowed only when routed through enterprise controls (access management, logging, policy enforcement), and often with restrictions around sensitive code paths and data exposure.

Cloud providers: “Verification debt” becomes the new operational risk

Cloud platforms are shaping practice because they set the default tooling for modern DevOps. A major concept crystallising the risk side is “verification debt”: the idea that when AI increases code output, organisations accumulate an ever-growing backlog of code that was generated faster than it was verified. This concept has been publicly discussed by Amazon CTO Werner Vogels and has been echoed in industry reporting on developer behaviour and verification gaps.
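To make the backlog idea concrete, here is a rough sketch of how verification debt accumulates when generation outpaces review. The function name, the weekly granularity, and the figures in the usage note are illustrative assumptions, not measurements from any source.

```python
def verification_debt(generated_per_week, verified_per_week):
    """Return the cumulative unverified backlog (in lines) after each week."""
    backlog = 0
    history = []
    for generated, verified in zip(generated_per_week, verified_per_week):
        # Reviewers can only clear code that exists, so the backlog
        # never drops below zero.
        backlog = max(0, backlog + generated - verified)
        history.append(backlog)
    return history
```

With hypothetical inputs of `[1000, 1500, 2000]` lines generated and a flat review capacity of `[900, 900, 900]`, the function returns `[100, 700, 1800]`: the debt grows every week even though review effort never falls.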

AWS’s response direction is instructive because it points to a best-practice trajectory: pairing code generation with built-in security scanning and attribution controls. AWS describes integrating CodeWhisperer with security scanning and “best practice” checks via tools such as CodeGuru Security, explicitly positioning AI assistance alongside automated verification.

AWS also highlights reference tracking (to detect suggestions resembling training data / open-source patterns) and security scanning capabilities as part of responsible enterprise use.

Best-practice pattern emerging in cloud ecosystems: AI coding assistants are increasingly being packaged with guardrails (security scanning, policy controls, and provenance/reference tracking) because the enterprise problem is no longer “writing code” but trusting code at scale.

Public-sector IT: Trial-first adoption with central guidance and measurable outcomes

Public-sector IT provides a valuable “best practice laboratory” because the constraints are real: legacy systems, procurement rules, security mandates, and large developer populations.

A strong reference case is the UK government’s trial of AI coding assistants. Reporting on the trial indicates that over 1,000 developers across ~50 departments tested assistants (including GitHub Copilot and others) and recorded measurable productivity gains (roughly an hour saved per day on average). However, the trial also found that only a minority of AI-generated code was accepted without edits. That is an important signal of healthy scepticism and review culture, which is exactly the governance posture this article argues for.

The UK also published government guidance specifically on using AI coding assistants in government, framing the challenge as enabling benefits while minimising risks, especially around unregulated “shadow AI” usage. Separately, the UK NCSC has published guidance on secure AI system development, reinforcing a security-by-design approach rather than ad hoc adoption.

Best-practice pattern emerging in public systems: Adopt through controlled pilots → publish shared guidance → scale with security guardrails and training, to prevent fragmented “shadow IT” adoption.

Taken together, these cases show a convergence: the most mature adopters are not debating whether AI coding is useful; they are building governance so that speed does not outpace verification, and so that institutional risk is managed rather than postponed.

Technical Debt as a Measurable Risk, Not an Afterthought

Aggregate repository analysis, including GitClear’s large-scale studies, indicates rising code churn in AI-heavy environments. Churn is not inherently negative, but persistent high churn signals weak initial quality and growing technical debt. The difference between mature and fragile organisations lies in how this signal is treated.

Best-practice firms explicitly track churn, refactoring rates, and maintenance effort as performance indicators. AI usage is evaluated not just on delivery speed but on its downstream impact on system stability and support costs. In this model, technical debt is no longer an abstract concern—it becomes a governed variable.
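As an illustration of tracking churn as a governed indicator, the sketch below computes the share of newly added lines that are rewritten or removed shortly after being written. The 14-day window and the input shape are assumptions made for this example. This is not GitClear's exact methodology.

```python
from datetime import date, timedelta


def churn_rate(lines, window_days=14):
    """Share of added lines that were rewritten or removed within the window.

    `lines` is an iterable of (added_on, changed_on) date pairs; changed_on
    is None when the line has not been touched since it was added.
    """
    lines = list(lines)
    if not lines:
        return 0.0
    window = timedelta(days=window_days)
    churned = sum(
        1
        for added_on, changed_on in lines
        if changed_on is not None and changed_on - added_on <= window
    )
    return churned / len(lines)


# Hypothetical sample: two of the four lines change within 14 days.
sample = [
    (date(2024, 1, 1), date(2024, 1, 5)),   # changed after 4 days: churn
    (date(2024, 1, 1), None),               # never changed
    (date(2024, 1, 1), date(2024, 3, 1)),   # changed after 60 days: not churn
    (date(2024, 1, 2), date(2024, 1, 16)),  # changed after 14 days: churn
]
print(churn_rate(sample))  # 0.5
```

Computed per team or per repository and compared over time, a figure like this gives the "persistent high churn" signal a concrete threshold that can be reviewed alongside delivery speed.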

Addressing the Human Factor

Research has also highlighted a psychological dimension to AI-assisted development. Studies such as Perry et al. (2023) show that developers using AI tools often report higher confidence in their code, even when security quality is lower. This “confidence–competence gap” represents a subtle but serious risk.

Organisations that respond effectively do not rely on warnings alone. They retrain developers to treat AI outputs as provisional, encourage deliberate scepticism, and embed review rituals that make questioning AI-generated code a norm rather than an exception. In these environments, AI is positioned as a fast but fallible assistant, not an authority.

Security Threats and the Importance of Defence-in-Depth

Advanced threats such as polymorphic malware, demonstrated by research from HYAS Labs, underscore why AI governance must extend beyond development teams. When attackers can use the same tools to mutate malicious code at scale, security strategies based solely on known signatures become inadequate.

Global best practices increasingly rely on behaviour-based detection, runtime monitoring, and layered defence mechanisms. These approaches do not eliminate risk, but they reduce the likelihood that AI-generated complexity overwhelms institutional safeguards.

The lesson from global best practices is not that AI-assisted coding is dangerous, but that unmanaged acceleration is. Organisations that succeed with AI do so by redesigning workflows, metrics, and governance structures to reflect new realities.

Speed remains valuable, but it must be paired with verification, accountability, and institutional learning. Where these elements are present, AI enhances resilience. Where they are absent, it amplifies risk.

AI-assisted coding represents a structural shift in how software is produced. Its long-term impact will depend less on the sophistication of models and more on the quality of systems into which they are embedded.

Global best practices demonstrate that it is possible to capture productivity gains while maintaining security and control—but only when velocity is governed rather than celebrated uncritically. The future of software development will be shaped not by how fast code can be generated, but by how intelligently organisations adapt to that speed.

Tatvita Insight:

Tatvita Analysts examines technological change through the lens of resilience and applied governance. The organisations that thrive in an AI-accelerated world are not those that move fastest, but those that design systems capable of sustaining speed without breaking.
